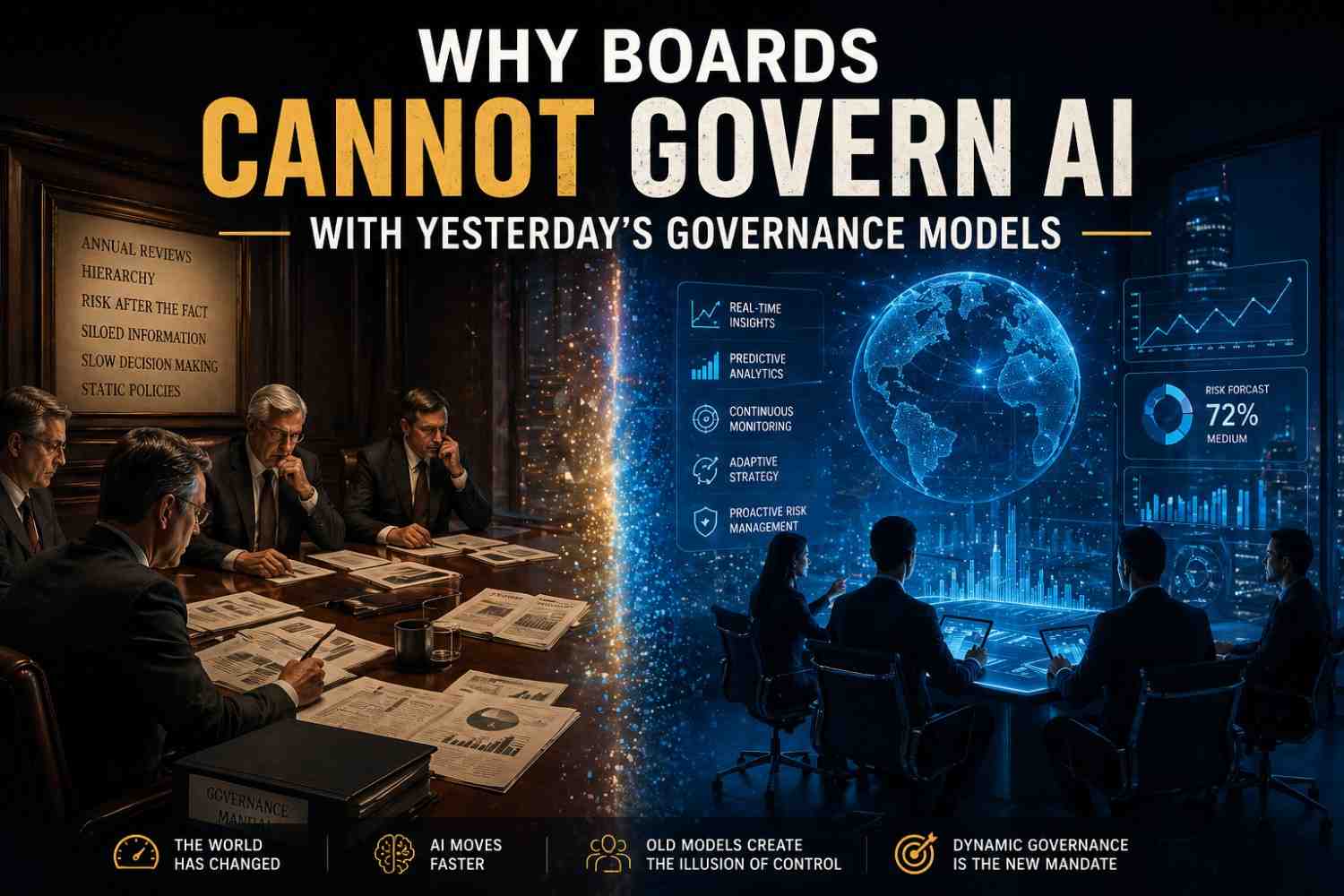

We are applying governance frameworks designed for slow-moving institutions to a technology that rewrites competitive landscapes in weeks. That gap, between institutional pace and AI velocity, is where strategic value is lost and strategic risk accumulates.

Governance Was Built for a Slower World

Traditional governance systems were engineered for environments where oversight could move faster than disruption. That was a reasonable assumption — until it wasn’t.

Those systems were built on a set of foundational assumptions that are now breaking down one by one.

AI systems can now generate decisions in seconds, alter workflows rapidly, compress operating costs, and create strategic advantages — before some boards have scheduled their next committee review.

The Data Already Signals the Shift

Research-backed indicators boards cannot afford to ignore

The plain truth: AI is moving faster than institutional readiness.

The Checklist Industry Has Arrived

Whenever uncertainty rises, markets produce templates. So today, boards are swimming in AI governance checklists, ethics declarations, oversight scorecards, vendor risk forms, and readiness assessments.

Some of these have genuine value. But checklists become dangerous when they create the illusion of control. An organisation can tick every box:

- We formed an AI committee

- We approved a policy

- We assigned ownership

- We completed a risk review

- We benchmarked ourselves

And still fail to ask the questions that matter most. Compliance theatre is not governance. It is risk delayed, not risk managed.

Why Boards Struggle: The Human Factor

This is not only a governance problem. It is a human one. Leadership teams remain predictably vulnerable to cognitive biases that make strategic exposure invisible until it becomes a crisis.

The Questions Boards Should Actually Be Asking

Not compliance questions — stewardship and survival questions

Which revenue lines are most vulnerable to AI substitution within the next 18 months?

Which competitors can now outperform us with fewer people and significantly lower cost structures?

Which decisions must remain human by design — and what is our ethical and legal rationale for that boundary?

Where are we dependent on external AI platforms or foreign models in ways that create strategic vulnerability?

How do deepfakes, synthetic fraud, and AI-driven trust erosion affect our brand and operational resilience?

Which roles should be fundamentally redesigned rather than preserved — and what does that require from leadership?

Can our management team adapt faster than the market shifts? What evidence supports that belief?

Which assumptions in our current strategy are already obsolete — and who is responsible for identifying them?

AI Sovereignty: Framed Too Narrowly

“AI sovereignty” has become a widely used phrase — but it is frequently reduced to a narrow set of infrastructure decisions: local hosting, national models, domestic data storage, policy announcements, symbolic projects.

Those elements matter. But true AI sovereignty is a layered capability that spans the entire strategic stack.

If a nation or enterprise cannot build, adapt, negotiate, and respond at speed, sovereignty becomes branding rather than strength.

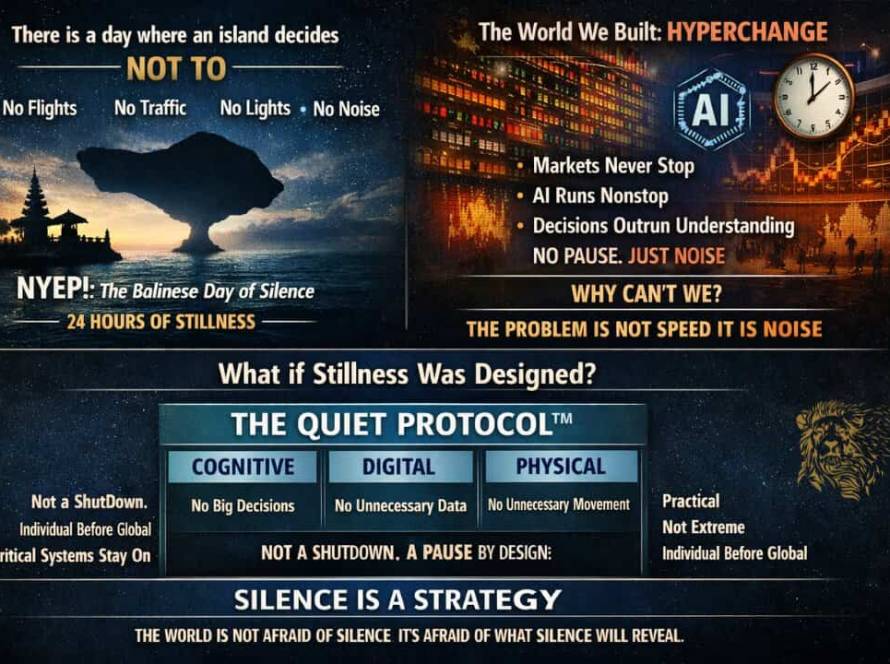

The New Governance Model Must Be Dynamic

Quarterly oversight rhythms are too slow for weekly AI shifts

Boards need governance systems designed for continuous movement — not periodic review. At Invictus Leader, we call this the Dynamic Foresight Loop: a framework built for nonlinear environments where static oversight becomes delayed oversight.

“Markets rarely announce the moment old models stop working. They simply punish delay.”

Ravi VS — Invictus Leader

The question is not whether AI belongs on the board agenda.

It does. Unquestionably.

The real question is whether boards are trying to govern a nonlinear force with linear habits. Whether they are mapping a transformed terrain with the tools of a prior era.

The organisations that respond early are often called lucky. They are not lucky. They were alert.